How it works

From PRD to report.

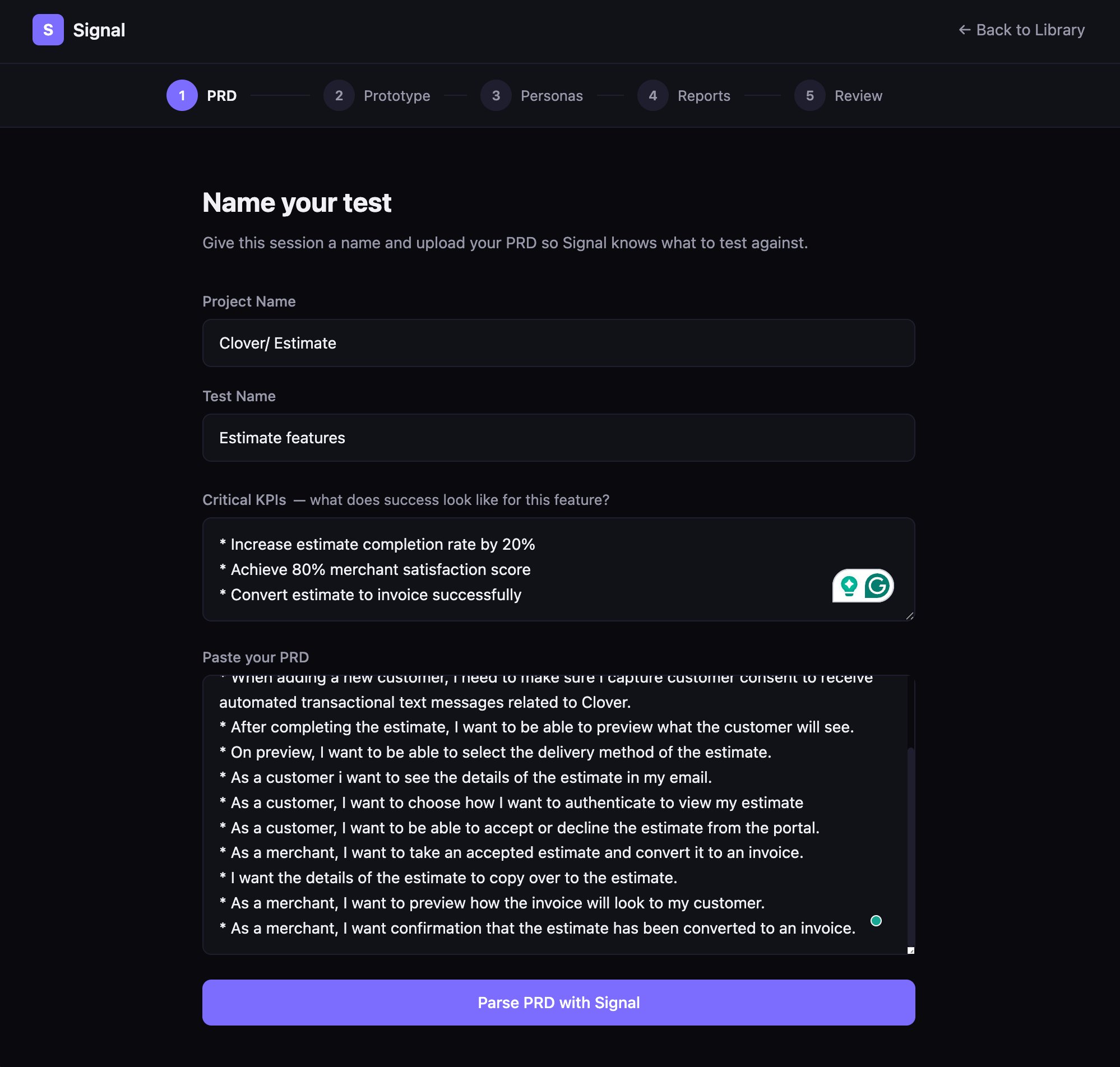

01

Upload your PRD

Signal parses the requirements document to understand the intended experience, user goals, and success criteria before generating any personas.

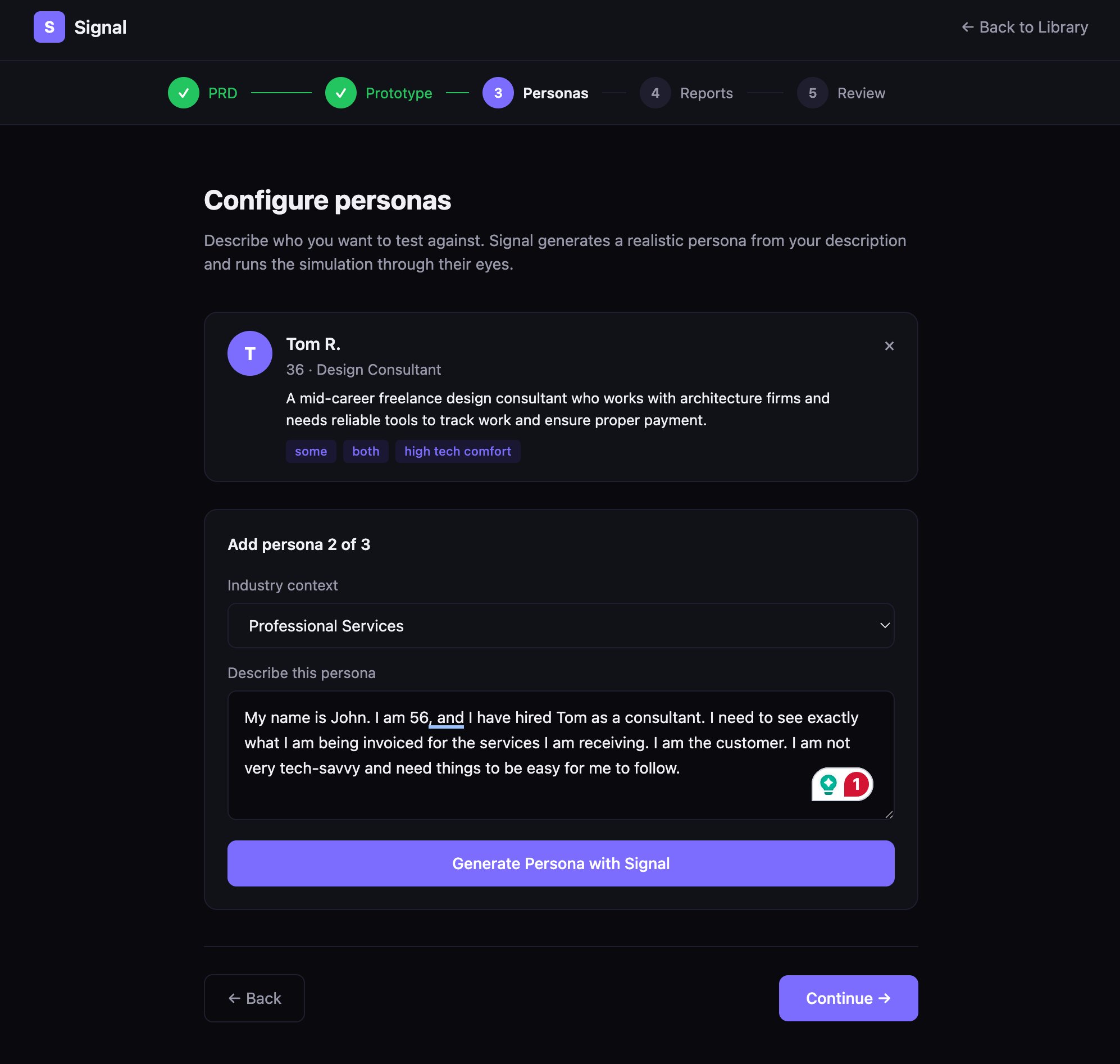

Web App + Plugin

02

Build your personas

You define each persona's details and map that persona to an industry. Signal generates a structured persona card from your input, which Claude uses as the lens for the simulation.
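To make "structured persona card" concrete, here is a minimal sketch of what such a card could look like. The field names (`name`, `industry`, `role`, `goals`, `frustrations`), the `PersonaCard` class, and the `to_prompt` helper are all illustrative assumptions, not Signal's actual schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class PersonaCard:
    """Hypothetical persona card; every field name here is an assumption."""

    name: str
    industry: str
    role: str
    goals: list[str] = field(default_factory=list)
    frustrations: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Serialize the card as JSON so it can be passed to the model
        # as the lens for the simulation.
        return "Evaluate the design as this persona:\n" + json.dumps(
            asdict(self), indent=2
        )


card = PersonaCard(
    name="Priya",
    industry="Healthcare",
    role="Clinic administrator",
    goals=["Schedule staff quickly"],
    frustrations=["Dense forms"],
)
persona_prompt = card.to_prompt()
```

Keeping the card as structured data rather than free text means the same persona can be reused across simulations without rewording it each time.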

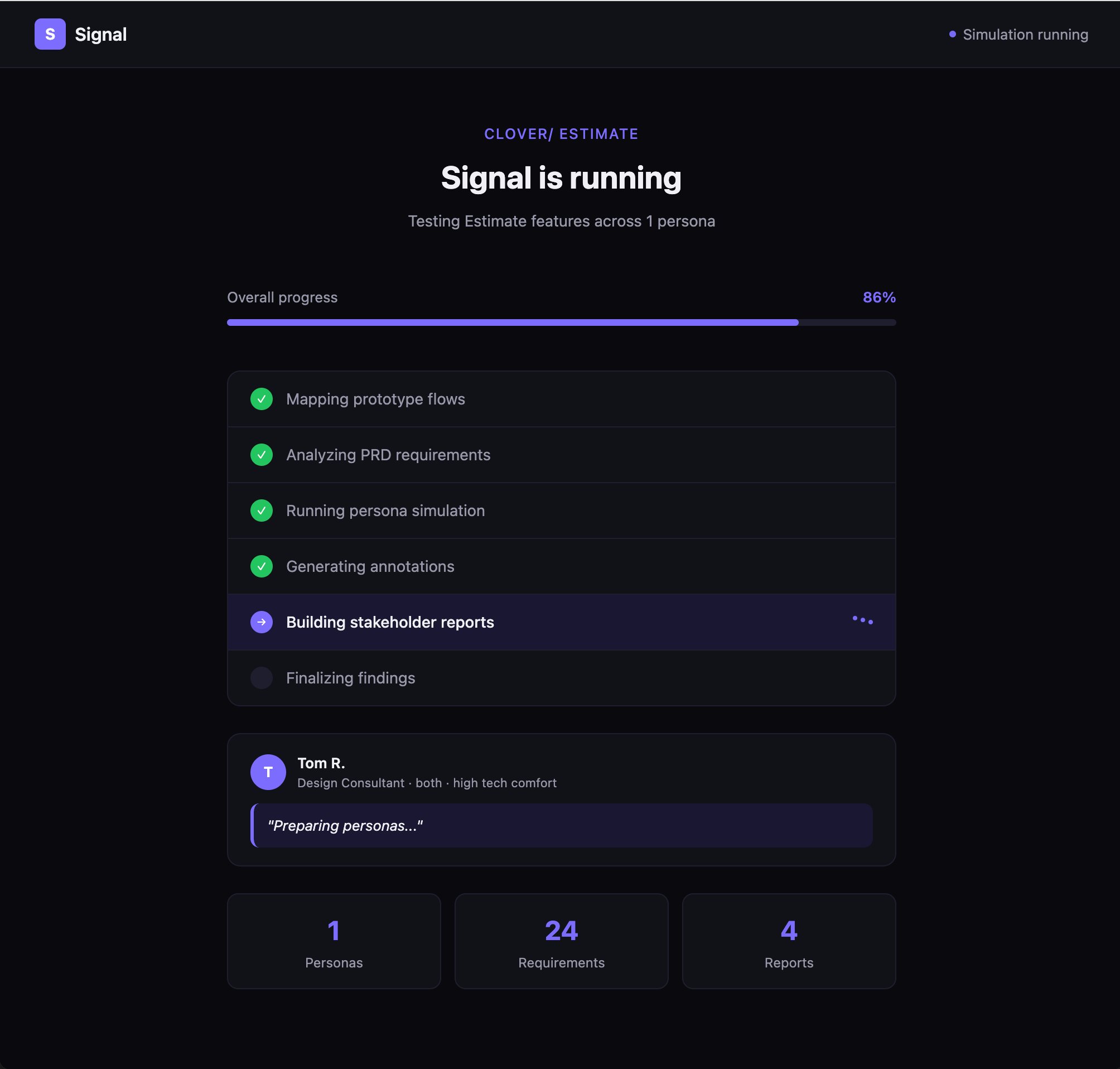

Claude API

03

Run the simulation

Claude receives the actual Figma frames as images via the plugin, sees what's on screen, and maps findings back to specific frames in the flow.
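As a rough sketch of how frames could reach Claude as images, the snippet below builds a request body in the shape the Anthropic Messages API accepts for base64 image content blocks. The helper `build_frame_request`, the frame IDs, the instruction text, and the placeholder model name are assumptions for illustration; how the plugin actually exports and sends frames is not described here.

```python
import base64


def build_frame_request(frames: dict[str, bytes], persona_prompt: str) -> dict:
    """Build a Messages API request body (hypothetical helper).

    `frames` maps an assumed frame ID to the PNG bytes exported for it.
    """
    content = []
    for frame_id, png_bytes in frames.items():
        # Label each image so findings can cite a specific frame.
        content.append({"type": "text", "text": f"Frame: {frame_id}"})
        content.append(
            {
                "type": "image",
                "source": {
                    "type": "base64",
                    "media_type": "image/png",
                    "data": base64.b64encode(png_bytes).decode("ascii"),
                },
            }
        )
    content.append(
        {
            "type": "text",
            "text": "List usability findings and cite the frame ID for each.",
        }
    )
    return {
        "model": "MODEL_NAME_PLACEHOLDER",  # assumption: substitute a real model ID
        "max_tokens": 1024,
        "system": persona_prompt,
        "messages": [{"role": "user", "content": content}],
    }


# Stand-in bytes; a real call would use the PNG exported for each frame.
request_body = build_frame_request(
    {"checkout-step-1": b"\x89PNG-demo"}, "You are a clinic administrator."
)
```

Interleaving a text label before each image is one simple way to let findings be mapped back to specific frames in the flow.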

Claude Vision

04

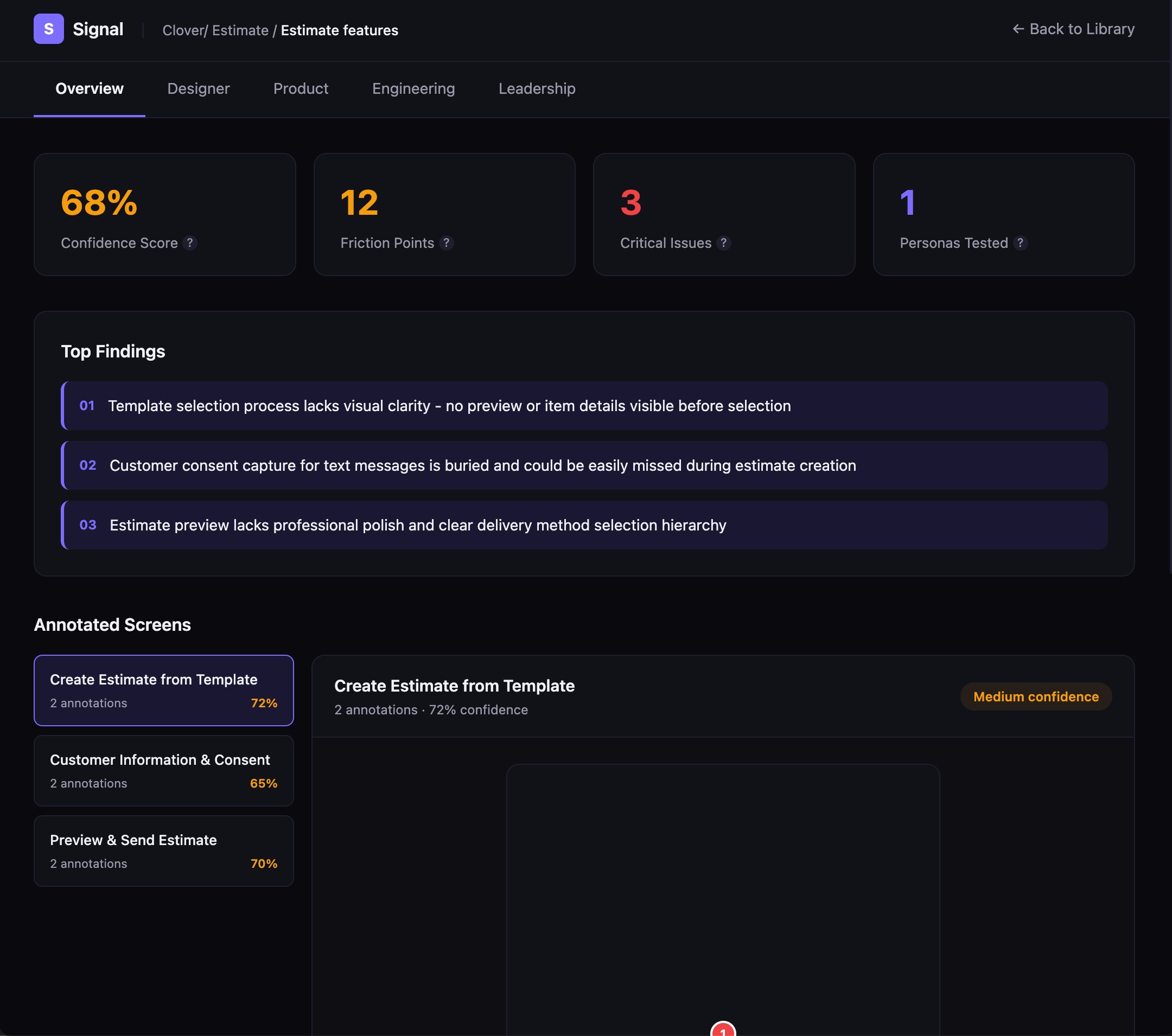

Get the report

Four simultaneous outputs: Designer, Product, Engineering, Leadership. Each is formatted for how that audience acts on information, instead of a single report everyone has to decode on their own.

4-Tab Output